pixel wrote: That's where BASIC would mess around while running programs, wouldn't it? In that case no debugging ML routines within BASIC programs for the unlucky?

This does not pose any problem in practice. The two buffers mentioned have "KEEP CLEAR!" written all over them, not primarily because of MINIMON, but because BASIC and KERNAL already use them at times, which makes any data stored there volatile for the purposes of most other machine code programs: $0100..$010F is used by the BASIC interpreter during FP->ASCII conversions, and the KERNAL uses $0100 up to $013F to keep an error log during tape load operations. The buffer at $0200..$0258 holds the BASIC input line.
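To make the overlap concrete, here is a minimal sketch in Python (not VIC-20 code) that tabulates just the three ranges named above and reports which system routine may overwrite a given address. The names `VOLATILE_RANGES` and `clobbered_by` are made up for this illustration, and the table covers only what is mentioned in this post, not the full memory map.

```python
# Inclusive address ranges as quoted in the post above.
# Hypothetical helper for illustration; not part of MINIMON.
VOLATILE_RANGES = [
    (0x0100, 0x010F, "BASIC FP->ASCII conversion"),
    (0x0100, 0x013F, "KERNAL tape load error log"),
    (0x0200, 0x0258, "BASIC input line buffer"),
]


def clobbered_by(addr):
    """Return every listed system user that may overwrite `addr`."""
    return [who for lo, hi, who in VOLATILE_RANGES if lo <= addr <= hi]


if __name__ == "__main__":
    for addr in (0x0105, 0x0120, 0x0230, 0x0259):
        users = clobbered_by(addr) or ["none of the ranges listed here"]
        print(f"${addr:04X}: {', '.join(users)}")
```

An empty result only means the address is outside these three ranges. It does not mean the location is free: the rest of page $01, for instance, is still the 6502 stack.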

Now, MINIMON happens to have a good idea of when these two buffers can be used without treading on the toes of BASIC and KERNAL.

It also does not hold state there that needs to be kept across machine code calls (with the G command) and re-entry (by means of a BRK instruction breakpoint).

Maybe I'm wrong as usual.

No worries. At least there's someone who reads the docs and asks questions about an important design decision that went into the construction of MINIMON.

Some time ago, I did an example debugging session with MINIMON where the operation of a customized USR() function (calling BASIC FP routines!) was watched in situ; see here:

viewtopic.php?t=9770.

Am getting a little light headed trying to understand the BASIC/KERNAL and it fills my heart with silent joy that Commodore fired Microsoft back then.

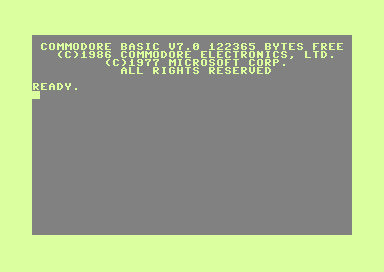

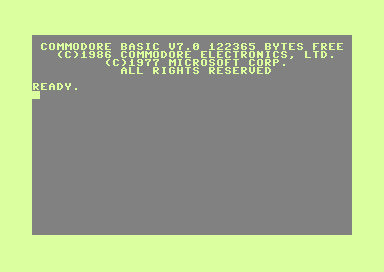

Back then, Commodore managed to get an extended licence that allowed for architectural changes to the interpreter, but it's still a Microsoft BASIC. Witness the copyright notice in the C128 start-up screen:

... so there.